- added a lot of unnecessary weight

- required a very large and potentially dangerous heatsink, because the LM7805 is rated for 1.5A while our system drew up to 2A

- required us to fiddle with two power supplies when powering up and shutting down the device

- AA batteries were expensive for how long they lasted (approximately 30 minutes)
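The heatsink problem follows from how a linear regulator like the LM7805 works: it burns off the difference between its input and output voltages as heat, so it must dissipate roughly (V_in - 5V) × I. The sketch below just evaluates that formula. The input voltages are hypothetical, since the original supply voltage isn't stated here.

```python
V_OUT = 5.0  # LM7805 fixed output, volts


def linear_dissipation(v_in: float, i_load: float, v_out: float = V_OUT) -> float:
    """Heat (watts) a linear regulator must shed: (V_in - V_out) * I_load."""
    # Only rejects inputs below the output; a real LM7805 also needs
    # roughly 2 V of headroom (dropout) to stay in regulation.
    if v_in < v_out:
        raise ValueError("input must be above the regulated output")
    return (v_in - v_out) * i_load


if __name__ == "__main__":
    # Hypothetical operating points, not measurements from our old setup.
    for v_in in (9.0, 12.0):
        for i_load in (1.0, 1.5):
            p = linear_dissipation(v_in, i_load)
            print(f"V_in={v_in:4.1f} V, I={i_load:.1f} A -> {p:4.1f} W of heat")
```

Even at the LM7805's 1.5A rating, a 12V input would mean 10.5W of heat. That explains the size of the heatsink we needed.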

Motivated by these issues, we decided to switch to a slim 2000mAh, 3-cell LiPo battery that fits within the profile of the stripped-down Kinect.

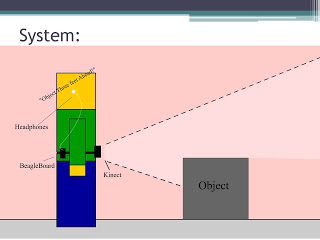

A 2000mAh, 3-cell LiPo battery delivers a nominal 11.1V (3 * 3.7V) and can supply 2A continuously for one hour. Since our system averages less than 2A, it will last for over an hour on a single charge. Our previous tests also verified that the Kinect, which is rated for 12V, still functions at voltages as low as 8.5V. Not everything is perfect, however. We still need a voltage regulator to provide 5V for the BeagleBoard, and LiPo batteries require much more care to ensure they are neither overcharged nor over-discharged.
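The battery arithmetic above can be checked in a few lines. This is an idealized sketch. It treats the full rated 2000mAh as usable and ignores both the voltage cutoff and capacity loss as the pack ages, so real runtimes will be somewhat shorter. The 1.5A average in the loop is a hypothetical value.

```python
NOMINAL_CELL_V = 3.7   # nominal volts per LiPo cell
CELLS = 3              # 3-cell ("3S") pack
CAPACITY_MAH = 2000    # rated capacity


def pack_voltage(cells: int = CELLS, cell_v: float = NOMINAL_CELL_V) -> float:
    """Nominal pack voltage: cells in series add their voltages."""
    return cells * cell_v


def runtime_hours(avg_current_a: float, capacity_mah: float = CAPACITY_MAH) -> float:
    """Idealized runtime: capacity (Ah) divided by average current (A)."""
    if avg_current_a <= 0:
        raise ValueError("average current must be positive")
    return (capacity_mah / 1000.0) / avg_current_a


if __name__ == "__main__":
    print(f"Nominal pack voltage: {pack_voltage():.1f} V")  # 11.1 V
    for amps in (2.0, 1.5):  # 1.5 A is a hypothetical average draw
        print(f"{amps:.1f} A average -> {runtime_hours(amps):.2f} h")
```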

For our new voltage regulator, we are using a more robust switch-mode component (a BEC) that accepts a 6-23V input and outputs 5V at up to 3A. This lets us eliminate the heatsink and draw power directly from the LiPo instead of relying on a second power supply.
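As a sanity check, the pack's whole operating range should fall inside the regulator's 6-23V input window and stay above the 8.5V at which the Kinect still worked. The sketch assumes the standard 4.2V per cell for a fully charged LiPo. It also uses 9.5V, the cutoff chosen for the voltage cutoff circuit, as the lowest operating voltage.

```python
REG_IN_MIN_V, REG_IN_MAX_V = 6.0, 23.0  # regulator input range quoted above
KINECT_MIN_V = 8.5   # lowest voltage at which the Kinect worked in our tests
FULL_CELL_V = 4.2    # fully charged LiPo cell (standard value; assumption)
CUTOFF_V = 9.5       # cutoff voltage chosen for the 3-cell pack
CELLS = 3


def pack_range(cells: int = CELLS) -> tuple[float, float]:
    """Return (lowest, highest) pack voltage seen during normal operation."""
    return CUTOFF_V, cells * FULL_CELL_V


def fits_regulator(low: float, high: float) -> bool:
    """True if the whole pack range lies inside the regulator's input window."""
    return REG_IN_MIN_V <= low and high <= REG_IN_MAX_V


if __name__ == "__main__":
    low, high = pack_range()
    print(f"Pack operates between {low:.1f} V and {high:.1f} V")
    print("Within regulator input range:", fits_regulator(low, high))
    print("Above Kinect minimum:", low >= KINECT_MIN_V)
```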

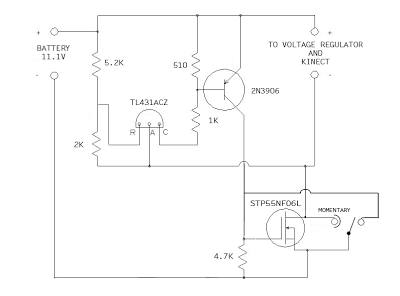

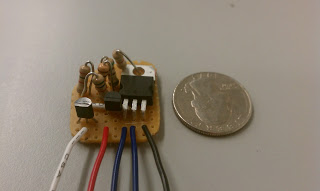

The final circuit this system needs is a voltage cutoff circuit. LiPo cells lose their ability to accept a full charge if they are discharged below 3.0V, so we need a circuit that cuts off power to the system before the 3-cell LiPo discharges to 9.0V (3 * 3.0V). Because 9.0V is the absolute limit, we built our circuit, with the help of Dan B., to cut off at 9.5V. Dan's original circuit uses a single momentary switch to turn the device on and has no switch to turn it off. With our modifications, we will be able to operate the belt with a single (ON)-OFF switch. The parentheses around 'ON' indicate that the switch is momentary in the ON position and static in the OFF position. The circuit is shown below in schematic and PCB form:
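The exact topology of Dan's circuit isn't documented here. However, a TL431 (listed in the parts list) is commonly used as a voltage detector. In that arrangement, a resistor divider from the pack feeds its REF pin, and the part starts conducting once the divided voltage exceeds its internal reference (about 2.495V typical for the TL431A). The trip point is then V_ref × (1 + R_top / R_bottom). The sketch below computes that threshold. The resistor values are hypothetical, and it ignores the small REF pin current and the reference's tolerance.

```python
V_REF = 2.495  # TL431A typical reference voltage, volts


def trip_voltage(r_top: float, r_bottom: float, v_ref: float = V_REF) -> float:
    """Pack voltage at which the divider output equals the TL431 reference."""
    return v_ref * (1.0 + r_top / r_bottom)


def top_resistor_for(target_v: float, r_bottom: float, v_ref: float = V_REF) -> float:
    """Ideal top resistor that places the trip point at target_v."""
    return r_bottom * (target_v / v_ref - 1.0)


if __name__ == "__main__":
    r_bottom = 10_000.0  # hypothetical bottom resistor, ohms
    ideal = top_resistor_for(9.5, r_bottom)
    print(f"Ideal top resistor: {ideal:.0f} ohms")  # 28076 ohms
    # 28.0k is a standard E96 value close to the ideal (hypothetical choice)
    print(f"Trip point with 28.0k / 10k: {trip_voltage(28_000.0, r_bottom):.3f} V")  # 9.481 V
```

With these values, the trip point lands about 20mV below the 9.5V target. That is still comfortably above the 9.0V absolute limit.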

Below is a video demonstrating proper function of these components, using a power supply instead of a LiPo to show the voltage cutoff.

PARTS LIST:

Battery

- 2000mAh 3S LiPo battery 15-25C — LiPo battery

- Thunder-AC6-Charger-Power — charging station

- LiPo guard charging bag — safety charging bag

- Deans Ultra Connector Set — charging leads connectors

Voltage Regulator

- 5VDC Voltage Regulator (BEC), Switch-Mode Type — 5V voltage regulator

Voltage Cutoff

- STP55NF06L — power transistor

- TL431ACZ — shunt regulator

- 2N3906 — bipolar junction transistor, pnp

- TIGC5B-6S-BL-NBL — switch option 1

- SRN91-15BB — switch option 2
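The "15-25C" in the battery listing is a discharge rating relative to capacity. Here it is read as 15C continuous and 25C burst, which is a common convention but an assumption in this case. Under that reading, the maximum current is capacity × C-rating. A quick sketch shows how much headroom that leaves over our roughly 2A draw:

```python
CAPACITY_AH = 2.0  # 2000mAh pack


def max_current_a(c_rating: float, capacity_ah: float = CAPACITY_AH) -> float:
    """Maximum discharge current implied by a C-rating."""
    return capacity_ah * c_rating


def c_rate(current_a: float, capacity_ah: float = CAPACITY_AH) -> float:
    """Express a load current as a multiple of the pack capacity (C-rate)."""
    return current_a / capacity_ah


if __name__ == "__main__":
    print(f"15C continuous: {max_current_a(15):.0f} A")  # 30 A
    print(f"25C burst:      {max_current_a(25):.0f} A")  # 50 A
    print(f"Our ~2 A load:  {c_rate(2.0):.1f}C")         # 1.0C
```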